The Last Media Buyer

I'm at about 12,000 feet in the Caucasus Mountains in Georgia. The country, not the state. I get asked every single time.

My son is two and a half. He's napping. My wife is pregnant with our second. I spent this morning snowboarding and I'll spend this afternoon reviewing ad performance data for a business that manages millions in spend.

If that sounds like a weird combination, it is. Running a performance marketing agency is supposed to be a 60-hour-a-week, glued-to-your-laptop kind of existence. For most people in this industry it still is. Three years ago it was for me too. I was the guy on the Monday morning panic call explaining why CPA went up 11% while silently wondering if it was even a problem.

That version of the job is dying. And the thing that's killing it is the same thing that emptied out the Wall Street trading floors 20 years ago. Almost nobody in media buying is talking about this honestly because it's scary and it means most of what we do every day doesn't matter nearly as much as we think it does.

Let's start with a little history.

The Wall Street Thing

I use this analogy all the time and I'm going to use it again because nothing else explains it as cleanly.

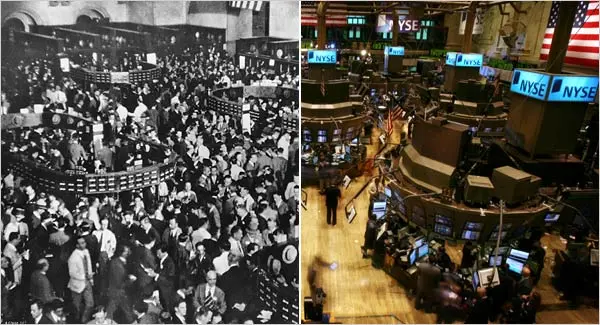

Go look at photos of the New York Stock Exchange trading floor in 1990. It's pure chaos. Dudes in colored jackets screaming prices at each other, hand signals flying, million-dollar decisions being made based on gut feel and whatever someone overheard in the bathroom. Just an auction market run entirely by humans.

Now look at it in 2025. It's a museum. The few people still on the floor are basically there for CNBC. The real trading happens in server farms in New Jersey. Algorithms executing in microseconds.

The thing people miss about that transition is that it didn't happen because algorithms were smarter than the traders. Not at first. It happened because they were more consistent. They didn't have bad Mondays. They didn't get emotional after a losing streak. They didn't take two weeks off to go to Europe. They just ran the system. All day. Every day.

Then a fund called Renaissance Technologies came along and did something else entirely. They didn't just automate execution. They built proprietary data infrastructure from scratch. Hired quantitative analysts. Created models that could find patterns no human would ever spot. They didn't replace the trader. They replaced the entire concept of trading.

Their returns were insane. Their Medallion fund reportedly averaged about 66% a year before fees. Sustained. For decades. While everyone else was fighting over 11% from the S&P 500.

Now think about what Meta is building right now. Advantage+, AI creative tools, automated campaign management. They are literally handing everyone the index fund of advertising. Give us your product, give us your budget, we handle the rest. And it'll work pretty well. For everyone. Equally.

That's the problem.

If your competitive advantage is that you're good at using Meta's tools, you have the same edge as someone who's really good at buying index funds. Which is zero edge.

We're trying to build the Renaissance fund.

Every Dashboard You Use Is Lying to You

Something happens at basically every e-commerce brand every Monday morning and it drives me insane.

Media buyer opens Triple Whale. Or Northbeam. Or just Ads Manager. Looks at last week's CPA. Compares it to the week before. CPA went up. Panic sets in. They start changing stuff. New creatives, new audiences, budgets get shifted around.

Nobody stops to ask the obvious question. Was last week actually bad?

This is what made me crazy enough to start building our own system. Every single performance tool in this industry gives you time comparisons. This week versus last week. This month versus last month. It seems useful because numbers going up equals bad and numbers going down equals good. Simple.

Except it's completely delusional.

What if your comparison period has Q4 holiday data baked in? Now you're comparing a random Tuesday in February against Black Friday week. What if a competitor pulled $50K a day in spend from the same auction, which made your costs artificially low two weeks ago? What if you launched 20 creatives last week and only 10 this week and the volume difference alone explains the CPA change?

Time comparisons tell you what happened. They don't tell you what should have happened. Every single decision you make based on a broken comparison just compounds the problem.

That media buyer who panicked on Monday? They probably would have been fine doing absolutely nothing. Performance was within normal range for the system. But they had no way to know that. So they started tweaking things. Which introduced new variables. Which actually made performance worse. Which made them panic even harder on Tuesday.

I've watched this exact cycle destroy campaigns. Destroy client relationships. Destroy entire agencies. Millions of dollars moved around because of vibes.

What you actually need is something closer to a Z-score. A way to measure how far today's performance deviates from what's normal given your actual conditions. Not "is this better than last Tuesday" but "is this within the expected range of how our specific system performs right now." Those are completely different questions and almost nobody is asking the second one.
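Here's a minimal sketch of that second question in Python. The baseline CPAs, the function names, and the two-standard-deviation threshold are all assumptions for illustration, not a description of any production system. A real version would build the baseline from comparable conditions (same season, similar creative volume), which this skips.

```python
from statistics import mean, stdev


def cpa_z_score(baseline_cpas, today_cpa):
    """Return how many standard deviations today's CPA sits from the baseline mean."""
    mu = mean(baseline_cpas)
    sigma = stdev(baseline_cpas)  # sample standard deviation; needs 2+ data points
    if sigma == 0:
        raise ValueError("baseline has no variation, so a z-score is undefined")
    return (today_cpa - mu) / sigma


def is_within_normal_range(z, threshold=2.0):
    """Treat anything inside +/- threshold standard deviations as normal noise."""
    return abs(z) <= threshold


# Hypothetical daily CPAs from a comparable recent window (mean is 40.0).
baseline = [40.0, 42.0, 38.0, 41.0, 39.0, 43.0, 37.0]

# Today's CPA is 10% above the baseline mean, the kind of move that starts a panic call.
z = cpa_z_score(baseline, today_cpa=44.0)

print(f"z = {z:.2f}, within normal range: {is_within_normal_range(z)}")
```

With these made-up numbers, a CPA that is 10% "worse" lands about 1.85 standard deviations from the mean, which is inside the threshold. The week-over-week view says panic. The deviation view says do nothing.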

SKU-Level Is the Only Level That Matters

This is where I want to get specific because the general "AI is changing marketing" conversation is useless. Everyone knows AI is changing marketing. The question is how. And the answer is way more boring and way more important than people want to hear.

We work with a fitness brand that does nine figures. They have 75 SKUs. Their hero product holds something like 95% market share in its category. It prints money.

But they also sell four or five other product categories. And when you look at the brand-level dashboard, everything looks fine. Average CPA is acceptable. Growth looks healthy. No problems here.

Except "brand-level" is a complete lie. It's like averaging your stock portfolio. Your Amazon position is up 40% and your speculative crypto play is down 80% and the average tells you everything is fine. Everything is not fine. You have one thing working great and one thing hemorrhaging cash and the average is hiding both.

When we broke this brand down to the SKU level the picture was totally different. Hero product: $32 CPA, unlimited scale, basically a money printer. Secondary category: $85 CPA, inconsistent, burning cash. Same brand. Same team. Same creative approach being applied to both of them.

That's the actual problem. Most agencies and most brands treat their product catalog like it's one thing. They find a creative style that works for the best seller and slap it across everything else. But someone buying the hero product and someone buying the secondary product are completely different people. Different motivations. Different pain points. Different angles that resonate. You cannot just take your winning landing page and swap the product image and expect it to work.

We learned this the hard way. More than once.

So we built everything at the SKU level. Benchmarks per SKU. Creative performance per SKU. Landing page data per SKU. The system doesn't know or care what brand something belongs to. It only knows this specific product, sold to this specific type of person, using this specific message, at this specific cost. Is it working? Is it scaling? That's it.
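To see why the blended number hides so much, here's the arithmetic as a short Python sketch. The $32 and $85 CPAs are the ones from the breakdown above. The spend and purchase counts are hypothetical, picked only so the per-SKU CPAs come out to those figures.

```python
def cpa(spend, purchases):
    """Cost per acquisition: total spend divided by purchases."""
    return spend / purchases


# Hypothetical spend and purchase counts, chosen to reproduce the $32 and $85 CPAs.
skus = {
    "hero": {"spend": 64_000.0, "purchases": 2_000},
    "secondary": {"spend": 17_000.0, "purchases": 200},
}

# Brand-level view: pool everything, then divide.
total_spend = sum(s["spend"] for s in skus.values())
total_purchases = sum(s["purchases"] for s in skus.values())
blended = cpa(total_spend, total_purchases)

# SKU-level view: divide per product.
per_sku = {name: cpa(s["spend"], s["purchases"]) for name, s in skus.items()}

print(f"blended CPA: ${blended:.2f}")
for name, value in per_sku.items():
    print(f"{name}: ${value:.2f}")
```

Because the hero product drives most of the volume, the blended CPA comes out under $37 in this example. The $85 product barely moves the average, which is exactly why nobody notices it on the brand-level dashboard.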

Once you get that level of granularity, things start getting interesting.

The Creative Matrix

At the end of the day every ad is really just two things. A persona, which is who you're talking to. And an angle, which is what you're telling them. Mom with chronic back pain, promise of relief. Fitness guy, promise of gains. Budget-conscious shopper, promise of value. Everything else is just execution detail.

We built something we call the Creative Matrix. It maps every single creative we've ever run against its persona, its angle, and its SKU. Then we layer real performance data on top of all of it.

When it's working right you can see things like: for the hero product, the "pain relief for older adults" angle using testimonial-style creative gets a $38 CPA and scales to $500 a day. But the "home fitness equipment" angle with comparison-style creative sits at $72 and caps out at $150 a day. Totally different results from the same product just by changing who you talk to and how.

But the really useful thing is the gaps. Our system flagged that one angle had been tested across 14 different creatives on the hero product but zero creatives had ever tested that same angle on a secondary product line. We call that an undisputed angle. There's evidence it works in adjacent products but nobody has ever validated it for this specific SKU.

No human came up with that insight in a brainstorm. The system surfaced it by pattern-matching across hundreds of categorized creatives. That is a completely different way to generate creative strategy than sitting in a Slack channel going "what should we try next."
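The gap-finding part is simple once every creative is tagged. Here's a rough Python sketch, assuming each creative is stored as a (creative ID, SKU, persona, angle) row. The row layout, the sample data, and the function name are hypothetical, not the actual schema.

```python
from collections import defaultdict

# Hypothetical tagged creatives: (creative_id, sku, persona, angle).
creatives = [
    ("c001", "hero", "older adults", "pain relief"),
    ("c002", "hero", "older adults", "pain relief"),
    ("c003", "hero", "fitness guy", "home fitness equipment"),
    ("c004", "secondary", "fitness guy", "home fitness equipment"),
]


def untested_angles(rows, proven_sku, target_sku):
    """Return angles tested on proven_sku but never on target_sku,
    mapped to how many creatives tested them on proven_sku."""
    counts = defaultdict(lambda: defaultdict(int))  # angle -> sku -> creative count
    for _creative_id, sku, _persona, angle in rows:
        counts[angle][sku] += 1
    return {
        angle: by_sku[proven_sku]
        for angle, by_sku in counts.items()
        if by_sku.get(proven_sku, 0) > 0 and by_sku.get(target_sku, 0) == 0
    }


gaps = untested_angles(creatives, proven_sku="hero", target_sku="secondary")
print(gaps)
```

Here "pain relief" comes back as a gap: two creatives on the hero product, none on the secondary line. The lookup is trivial. The hard part is having hundreds of creatives consistently tagged in the first place.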

We took it further and built something called Scale Score. It's basically a probability model. It takes the benchmark CPA for a given SKU, the depth of creative testing that's been done (how many angles and personas have been explored), the total volume of creatives deployed, and the distribution of performance across all of them. Then it outputs a number. An 85 means there's an 80 to 90% chance this will scale if you push budget behind it. A 22 means you need a lot more testing before you should trust it with real money.

For our fitness brand the hero products all sit at 80 to 90 scale scores. Validated. Proven. Push budget. The newer product lines sit at 20 to 30. Not because they're bad products. There just isn't enough data yet. Not enough angles tested. Not enough personas explored.
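To make the inputs concrete, here's a toy version in Python. This is not the actual Scale Score model and it is not a calibrated probability. It's a simple heuristic (hit rate against the benchmark CPA, discounted by how thin the testing is), and every name, default, and weight in it is an assumption. The example inputs are deliberately chosen so the outputs land near the 85 and 22 mentioned above.

```python
def toy_scale_score(cpas, benchmark_cpa, angles_tested, personas_tested,
                    min_creatives=30, min_angles=5, min_personas=5):
    """Toy 0-100 score: share of creatives at or under benchmark CPA,
    scaled down when volume, angle coverage, or persona coverage is thin."""
    if not cpas:
        return 0.0
    # Distribution of performance: how many creatives beat the benchmark.
    hit_rate = sum(1 for c in cpas if c <= benchmark_cpa) / len(cpas)
    # Volume and depth of testing, each capped at 1.0 (assumed minimums).
    volume = min(len(cpas) / min_creatives, 1.0)
    angle_cov = min(angles_tested / min_angles, 1.0)
    persona_cov = min(personas_tested / min_personas, 1.0)
    coverage = (volume + angle_cov + persona_cov) / 3
    return round(100 * hit_rate * coverage, 1)


# Well-tested SKU: 40 creatives, 34 at or under a $32 benchmark, broad coverage.
mature = toy_scale_score([30.0] * 34 + [50.0] * 6, benchmark_cpa=32.0,
                         angles_tested=8, personas_tested=6)

# Newer SKU: 6 creatives, 4 at or under an $85 benchmark, 2 angles, 2 personas.
new = toy_scale_score([80.0] * 4 + [120.0] * 2, benchmark_cpa=85.0,
                      angles_tested=2, personas_tested=2)

print(mature, new)
```

The point of the sketch is the shape, not the formula. The newer SKU scores low even though most of its creatives beat the benchmark, because six creatives across two angles isn't enough evidence to trust with real money.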

When scale score is low the system knows to go find more information. It pulls competitor research. Landing pages. Ad transcripts. Angles and personas that competitors are running right now. We specifically look at aggressive direct response advertisers because their data is clean. They don't have brand equity propping up mediocre creative. If their ads are still running, the creative actually works.

Low score triggers research. Research generates hypotheses. Hypotheses become new creatives. Creatives get tested. Performance data feeds back into the matrix. Score goes up. It's a loop. And the human's job increasingly becomes watching the loop run instead of running it.

The Part That Scares Me

I want to be real about where we actually are right now because most people in this space either oversell what they've built or won't admit how hard the transition really is.

We have a batch uploader. Every creative that goes into the system gets assigned an 18-digit unique ID. Our own tracking code, not Meta's naming conventions. That ID carries everything. The persona. The angle. The creative transcript. All of it tied back to first-party purchase data so we can see which ads actually drove new customer acquisitions. Not Meta's attribution model. Actual purchase data.

What this means in practice is that the ad account looks absolutely insane. It's just walls of numbers. No campaign names. No human-readable naming structure. If you opened it up cold you would have no idea what you were looking at. You'd have to query the system to understand any of it.

And that right there is the psychological barrier that kills most agencies who try to make this jump. You have to stop looking at the ad account directly. You have to trust the scores. You have to let the system upload creatives in batches, test them against a max CPA threshold, and automatically cycle through the hypothesis loop without you intervening.

The human stops being the decision-maker and starts being the observer.

I'll be straight with you. I'm not fully there myself yet. We're phasing into it. Some days I still want to go in and manually check things because the sense of control feels safe. It's the exact same reason most people can't stick with index fund investing. They want to pick individual stocks because the act of picking feels like being in control, even though the data says they'd be better off not touching anything.

But control is a delusion when you're operating at real scale. 75 SKUs across hundreds of creatives across constantly shifting auction dynamics across seasonal trends. I cannot process all of that. No media buyer can. The math is too big for a human brain to hold.

The machine has to do it. And you have to get comfortable letting it.

The Data Moat

This is the part of the AI in marketing conversation that no one posts about or wants to have.

Everyone's excited that Claude can write ad copy and Midjourney can make images and Meta's AI can basically build your campaigns for you. And yeah. That's cool. It's also commodity intelligence. Everybody has access to the exact same tools.

You know what is not a commodity? Two years' worth of structured team communication data fed into an intelligence layer. SKU-level benchmark scores refined over hundreds of thousands of dollars in actual spend. A creative matrix with real performance data mapped against real angles and real personas for specific products in specific categories.

You cannot replicate that by signing up for a SaaS tool. You cannot prompt your way to it. It is the result of time plus money plus systematic categorization, all compounding on top of each other. And here is the counterintuitive part. As the AI tools get better and more accessible, this proprietary data becomes more valuable, not less. Because better models can extract more signal from better data. But only if the data exists to feed them.

Back to the fund comparison. If everybody gets access to the same Bloomberg terminal, the terminal is not the edge. The edge is the proprietary data and the proprietary models you're running on top of it. That has always been true and it's not going to stop being true.

That's the real race right now. Not "who has the best AI tools." That question is basically answered, or it will be within 12 months. The race is who has been systematically collecting and structuring their performance data in a way that compounds over time.

We got serious about this roughly 11 months ago. Before that we went through a phase of trying to duct-tape a bunch of n8n automations and open-source tools together. It was a disaster. We had 30 disconnected instances that couldn't talk to our core infrastructure at all. To make them communicate we would have had to write separate compiler code just to shuttle data between systems. We were building a pipeline to connect to another pipeline. It was stupid.

So we scrapped the whole thing and rebuilt on C# and BigQuery. Enterprise-grade data infrastructure. It cost more. It took longer. But now everything lives in one unified system and data flows cleanly between every module.

That decision felt painful at the time. It's the single biggest reason we're ahead of where most agencies are trying to get to. If you're sitting on 30 n8n instances right now with no real data layer underneath them, do yourself a favor and rethink the foundation. It'll save you six months of pain.

The 18-Month Window

Meta's endgame is obvious. Brands upload products and budgets. Meta handles everything else. Advantage+ is already eating most of the manual optimization work that media buyers do today. Within 18 to 24 months the basic AI-powered version of media buying is going to be genuinely good. Good enough that an average brand won't need a human buyer at all.

So if your value proposition is "I'm good at running Facebook ads," you have about 18 months of relevance left. The floor is rising fast. What used to require real expertise is going to require a credit card.

But the ceiling is rising even faster. The agencies and brands that build actual proprietary intelligence on top of what Meta provides will be operating at a level the platform's native AI simply cannot match. Not because their AI is smarter. Because their data is richer. Their context runs deeper. Their system understands the specific business in a way that a generic platform tool never will.

That is the divergence. In 18 months there will be two kinds of businesses in this space. The ones using Meta's AI, which is the index fund. And the ones running their own intelligence layer on top of Meta's AI, which is the quant fund. The first group is going to do fine. The second group is going to do dramatically, disproportionately better.

And the window matters because you cannot build this stuff retroactively. You can't go back and categorize two years of creative data after the fact. You can't manufacture benchmark scores from thin air. You can't fake institutional knowledge that was never captured. The intelligence compounds. Which means every month you spend not building it is a month where your future competitor is pulling further ahead.

What My Monday Actually Looks Like Now

Two years ago. I wake up. Open Ads Manager. Scroll through campaigns trying to get a feel for whether yesterday was a good day or a bad day. Not really sure. Open Slack. The client is already asking questions. Hop on a call to explain why CPA is up 15%, which honestly might not even be a real problem, but they're worried so now I'm worried. Spend three hours making manual adjustments to campaigns. Feel productive at the end of it. Actually accomplish very little that moved the needle.

Now. I wake up. Check the health score. It shows me a delta against our weighted benchmark. Today it's negative 0.3, which means slightly below expected performance. That's within normal range. No action needed. The system has flagged that one SKU hit an 85 scale score overnight, which means high confidence it'll keep scaling. It recommends bumping budget 40% and shows me exactly which creatives to push behind. Another SKU is sitting at 22, so the system pulled competitor research overnight and surfaced three angles we haven't tested yet. Creative briefs are already staged. Fifteen new creatives are queued for batch upload. By Friday the system will have categorized how they performed, updated all the scores, and queued the next round of tests.

I spent 45 minutes on all of that. Then I went snowboarding with my son.

I'm not saying this to show off. I'm saying it because this is where the entire industry is going. And the people who get there first are going to have a compounding advantage over everyone who's still sitting in front of dashboards trying to feel their way through it.

The Bet

The last media buyer is not going to be the person who is best at managing ad accounts. That job is going away. The last media buyer is going to be the person who built the system. Who put in the time and the money to create real data infrastructure. Real benchmarks. Real creative intelligence. And who had the discipline to step back and let the machine do what machines do better than people.

Everyone else is going to use Meta's AI and get Meta's returns. The index fund. It'll be fine. Just not exceptional.

I don't know about you but I didn't get into this to be fine.

I'm Ethan. I run Natura Labs and I'm building something called The Lab, which is a creative intelligence platform for performance marketing. You can find me at @thefieldprojects or in some mountain range or surf lineup somewhere.